Synoros Foundry R8-192

Maximum VRAM per dollar in an 8-GPU chassis

Budget-conscious buyer who needs raw VRAM capacity over speed / $4,000-$7,000

Total VRAM

192GB

GPUs

8x Tesla P40 24GB

Chassis

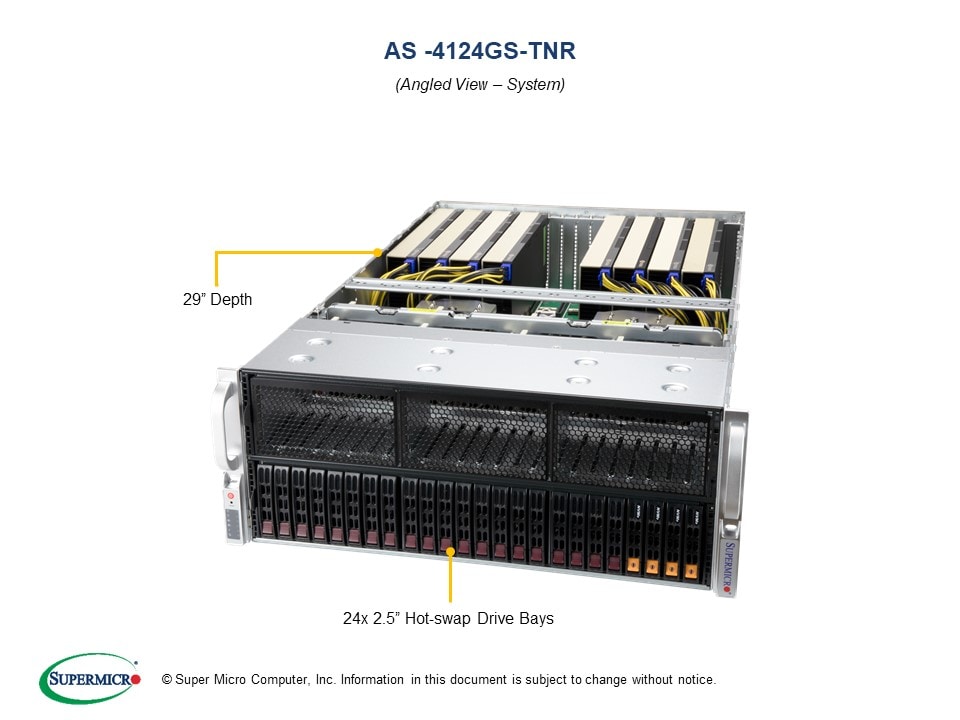

Used 8-GPU DDR4 Server (Supermicro/Dell class)

Form Factor

4U Rackmount

PCIe

PCIe 3.0

Starting at

$6,399

Model Compatibility: 7B, 13B, 24B-35B, 70B, 122B, 400B+

Sweet spot: 122B models at low-bit quantization, split across all 8 GPUs

Stretch: 400B experiments with aggressive quantization + CPU offload
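As a rough check on the compatibility tiers above, weight memory is approximately parameter count × bits per weight ÷ 8. The sketch below applies that rule against 8 × 24GB. It is a weights-only estimate under stated assumptions: it ignores KV cache, activations, and runtime overhead, assumes an even split across the cards, and the helper names `weight_gb` and `fits` are hypothetical.

```python
# Rough weights-only VRAM fit check for an 8x Tesla P40 24GB build.
# Assumption: weight bytes ~= params * bits / 8. KV cache, activations and
# runtime overhead are ignored, so real headroom is smaller than shown.

NUM_GPUS = 8
GB_PER_GPU = 24
TOTAL_VRAM_GB = NUM_GPUS * GB_PER_GPU  # 192 GB


def weight_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight footprint in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits(params_billion: float, bits_per_weight: float) -> bool:
    """True if the weights, split evenly across all GPUs, fit on each card."""
    per_gpu = weight_gb(params_billion, bits_per_weight) / NUM_GPUS
    return per_gpu <= GB_PER_GPU


if __name__ == "__main__":
    for params, bits in [(70, 8), (122, 4), (400, 4), (400, 3)]:
        total = weight_gb(params, bits)
        status = "fits" if fits(params, bits) else "needs CPU offload"
        print(f"{params}B @ {bits}-bit: ~{total:.0f} GB of weights -> {status}")
```

A 122B model at 4-bit needs about 61GB of weights, leaving room for context; a 400B model at 4-bit needs about 200GB, which is why it falls into the offload tier.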

Notes

•The P40 has no tensor cores and weak FP16 throughput. This is a capacity play, not a speed play.

•8x 250W passive cards = 2kW of GPU draw alone. 208/240V power is mandatory.

•The PCIe 3.0 host limits model-load and CPU-offload bandwidth across all 8 cards.

•Older Pascal architecture: per-token inference is slower than on Volta or Ampere cards with the same VRAM.

⚡208/240V required. 8x 250W GPUs + dual Xeon/EPYC host = ~2.5-3kW steady state.
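The power figures above reduce to current = watts ÷ volts. The sketch below uses the 8 × 250W GPU figure and the ~3kW upper end of the steady-state estimate. The 80% continuous-load derating is the common North American practice and is included here as an assumption; check local code and your PDU ratings.

```python
# Current draw for the quoted power figures: amps = watts / volts.
# Assumption: continuous loads are held to 80% of the breaker rating
# (common North American practice); adjust for your local code.

GPU_WATTS = 8 * 250          # 2,000 W for the GPUs alone
SYSTEM_WATTS_HIGH = 3000     # upper end of the ~2.5-3 kW steady-state estimate
CONTINUOUS_DERATE = 0.8


def amps(watts: float, volts: float) -> float:
    """Current in amperes for a given load and supply voltage."""
    return watts / volts


def min_breaker_amps(watts: float, volts: float) -> float:
    """Smallest breaker rating that keeps the load at or under 80% of it."""
    return amps(watts, volts) / CONTINUOUS_DERATE


if __name__ == "__main__":
    for volts in (120, 208, 240):
        print(
            f"{volts} V: GPUs {amps(GPU_WATTS, volts):.1f} A, "
            f"system {amps(SYSTEM_WATTS_HIGH, volts):.1f} A, "
            f"breaker >= {min_breaker_amps(SYSTEM_WATTS_HIGH, volts):.1f} A"
        )
```

At 120V the system-level current (25A) already exceeds a standard 15A or 20A branch circuit, which is the arithmetic behind the 208/240V requirement.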

PCIe: Gen3 cards on a Gen3 host. A capacity monster, not a speed build.
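To put the Gen3 limit in numbers, the sketch below computes theoretical one-direction x16 bandwidth from the per-lane transfer rate and 128b/130b line coding, then the time to move model weights at that peak. It assumes every GPU gets a full x16 link and that transfers reach the theoretical rate; real throughput is lower, and chassis that hang several GPUs off one PCIe switch share that uplink.

```python
# Theoretical PCIe transfer times (one direction, x16 link).
# Assumptions: each GPU has a full x16 link and transfers hit the
# theoretical peak; real throughput is lower, and GPUs behind a shared
# PCIe switch split its uplink bandwidth.

GT_PER_LANE = {3: 8.0, 4: 16.0}   # gigatransfers/s per lane
ENCODING = 128 / 130              # 128b/130b line coding (Gen3 and Gen4)
LANES = 16


def link_gb_per_s(gen: int, lanes: int = LANES) -> float:
    """Peak one-direction bandwidth in GB/s."""
    return GT_PER_LANE[gen] * ENCODING * lanes / 8


def transfer_seconds(gigabytes: float, gen: int) -> float:
    """Best-case time to move `gigabytes` over one link of the given generation."""
    return gigabytes / link_gb_per_s(gen)


if __name__ == "__main__":
    for gen in (3, 4):
        print(
            f"Gen{gen} x16: {link_gb_per_s(gen):.2f} GB/s, "
            f"fill one 24 GB card in {transfer_seconds(24, gen):.2f} s, "
            f"move 192 GB over one link in {transfer_seconds(192, gen):.1f} s"
        )
```

Load time is a one-off cost, but any weights offloaded to the CPU cross this same link repeatedly during inference, so the Gen3 ceiling matters most in the offload configurations.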

Upgrade path: Synoros Foundry R4-192A or R4-320

Configure Your Build


Replace P40s with V100 32GB (better compute, same PCIe slot): included

Freight shipping, calculated at checkout

Ships within the listed lead time.

Need something different? Contact sales for a custom build